Exploring The Future of Software

How New AI Should Transform Software Organizations, Not Just Code.

↓ Level 1: AI has settled nicely into its role as a code helper, but there are real barriers preventing it from taking over software development entirely

↓ Level 2: The biggest obstacle isn't generating new code—it's maintaining the complex systems we've already built

↓ Level 3: Once this maintenance problem is solved, we'll see AI transform not just our software, but the entire organizational structure around it

I know I'm making a bold claim with that headline. But in this piece, I want to share some predictions about the future based on both the courage my ignorance in software gives me and the experience I've gained over the last 4 years learning programming and building my own applications across the pre-LLM and post-LLM eras. I'm also drawing on my years of design and product management experience working alongside developers on everything from games to SaaS development. What I've noticed when discussing software's future is that what will truly shape it isn't just AI's ability to write code, but rather how it can maintain code and how organizations will restructure around this capability. While Conway silently watches us from afar, let's try to predict the future.

So to begin with: What did I discover as a designer who decided to learn programming and dive into coding?

I. Programmers are designers too, and usability matters.

Design patterns mean something entirely different to designers versus programmers. For programmers, having a well-designed code structure is crucial - it allows people to navigate increasingly complex codebases without getting lost, making everything more usable, readable, and discoverable. Learning to program is also learning to continuously interact with a code structure. To explain this to designers who haven't yet gotten their hands dirty with serious code, it's somewhat similar to atomic design principles or design systems. Programmers consider high-quality code to be reusable, built on properly scoped variables and functions rather than magic numbers, systematic in its patterns, and intuitive enough that someone looking at it for the first time can understand what's happening. You could even call it the user experience of written code.
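For designers who want to see what "magic numbers" look like in practice, here is a minimal sketch in Python. Everything in it (the function names, the discount rule, the values 50 and 0.2) is hypothetical and invented purely for illustration; it is not taken from any of my apps.

```python
# Hypothetical example: the names and numbers below are made up for illustration.

# Harder to read: the reader has to guess what 50 and 0.2 stand for.
def price_unclear(amount):
    if amount > 50:
        return amount - amount * 0.2
    return amount


# Easier to read: named constants explain what the numbers are for,
# and they can be changed in one place.
DISCOUNT_THRESHOLD = 50  # order total above which the discount applies
DISCOUNT_RATE = 0.2      # 20% off qualifying orders


def discounted_price(amount: float) -> float:
    """Return the order total after applying the discount, if it qualifies."""
    if amount > DISCOUNT_THRESHOLD:
        return amount * (1 - DISCOUNT_RATE)
    return amount
```

Both functions compute the same thing. The second one is simply kinder to the next person who opens the file, which is the "user experience of written code" in miniature.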

II. My Pre-LLM Period - Working With a Human Mentor

I started learning Swift in 2021, and I consider myself lucky to have started coding at exactly the right time. Had I begun in the post-LLM era, I would have jumped straight to solutions without taking time to understand the fundamental concepts. I wouldn't have developed such a clear perspective on what an LLM adds and takes away as a coding assistant. For context - before I started writing code line by line, I had already absorbed programming logic through Unreal Engine's designer-friendly Blueprint visual coding system while experimenting with games. I had even gotten as far as creating procedural game maps using just blueprints. But as a designer, the thought of writing code line by line always intimidated me.

In the pre-LLM period, I learned Swift by watching countless hours of online courses and tutorial videos. When I started building my own app and hit roadblocks, I'd revisit relevant sections of tutorials, consult my notes, or search Stack Overflow. But when working on my own application, I eventually hit serious walls where internet resources just weren't enough. I needed a human. I researched online coding mentor programs and hired an advanced Swift programmer. To better illustrate the contrast with the post-LLM period, let me share my interaction with this expert-level programmer whom I paid $25 for just 15 minutes of his time.

We hopped on a video call. He asked what I was trying to accomplish and where I was stuck.

I briefly explained my app, its purpose, and my specific roadblock. I mentioned I was a beginner programmer.

He remotely connected to Xcode (Apple's iOS development environment).

He navigated through my codebase to understand my approach (watching him work in real-time was fascinating).

When I asked, he mentioned that for a beginner, I had designed the code structure quite well.

He then made several attempts to solve the problem.

We ran tests, and after about 20-25 minutes, it worked.

He left me with some to-dos, and we ended the call.

We had a couple more sessions over the following days, totaling nearly an hour of support. Though this help was seriously expensive, I was grateful it existed, and thanks to it, I completed my app.

III. Post-LLM Era

It's November 2022, a year after my first app launch. I'm sipping my morning coffee at my favorite co-working space. I hear excited chatter from every table and can tell something is happening. Trying to understand the buzz, I catch the word "ChatGPT..." I immediately google "TechCrunch + ChatGPT" and discover that a company called OpenAI has released a chattable AI. Had that moment finally arrived? I'll spare you the details of my first experiments asking about the meaning of life and other classics everyone tried.

Fast forward to March 2025. Two and a half years after launch, while the chat interface and basic text generation have gained mass adoption, it hasn't yet delivered the transformative impact for businesses that many expected beyond defining AI-enhanced functions. Some dismiss it as "just a word prediction engine," while others insist "the future is here, but most people just don't get it yet."

Back to my experience. Naturally, I immediately migrated all my coding experiments to LLMs. Last year, I switched to Anthropic's Claude model, primarily because it could provide longer, uninterrupted code outputs. As they've refined Claude, I've been amazed at how quickly it grasps what I'm trying to accomplish. Often when explaining something to a human programmer, I needed to provide far more words, more context, and describe certain functions with painful precision.

Consequently, Claude became my code mentor + senior developer + junior developer + coding buddy and partner rolled into one. And that's just the coding aspect. It's also my editor, writer, health advisor, research assistant, social media writer, and more - all for just $20 monthly. So let's put aside debates about what AI is and how we use it, and simply acknowledge that we're getting an extraordinary service for $20 that represents a genuine paradigm shift. Let's process the striking reality that the dozens of different freelance services I previously paid for through Upwork and Fiverr have dropped to zero. Literally zero.

Though the timeframe is still short, there are speculative discussions and data points on this transition. Threads on Reddit claim that freelancer site traffic has been trending downward since November 2022, and Stack Overflow's traffic has declined significantly. Looking at my own Stack Overflow profile (You can check yours too here), I realized I haven't used the site since January 2023. The same applies to freelancer platforms.

IV. LLM Adoption Gap and Human Agency Problems

Let's cross-check some figures: Today, 63% of professional programmers have incorporated AI into their workflows, with another 14% planning to do so. Tools like GitHub Copilot, Cursor and Tabnine assist developers with code writing, refactoring, and documentation. The AI coding market is projected to grow by 25% by 2030. As someone who's coming from a design background, I want to identify the gaps, resistance points, and personas I've observed in developer and non-developer AI adoption.

Over the past year, when speaking to my non-developer professional friends who claim they regularly use AI, I've found that 95% are simply using ChatGPT's web interface for basic text generation. Based entirely on my limited sample (which is surely biased), I'd estimate only about 5% have paid memberships (published figures show 2.5% of ChatGPT's total user base). When I hear them describe their usage patterns, it's clear that most users engage with LLMs at only a surface level. Typically, when I explain my workflow or mention Claude or Perplexity, I get responses like "I should check that out" or "wow, how do you do that?" They're often unaware that much of their work could be done faster and more systematically with an LLM. The reason is simple: getting to that level requires time and effort. I'm not talking about simply uploading an Excel file and requesting a summary. I mean advanced AI work: developing sophisticated prompt systems, integrating AI into projects, and creating automations that generate reliable outputs. This explains why AI wrappers find customers and will continue to do so. My rough guess is that about 90% of users stay at the basic level, with only about 10% using these tools at an advanced level.

Now, let's get back to developers. There's a significant divide in the developer community. On one side are those claiming, "I launched my app with zero coding knowledge and now make $100k MRR," and on the other, those saying, "Sure, I use AI, but I'd never trust this word prediction engine to write substantial boilerplate code," who refuse to relinquish human oversight. Setting aside the first group, let me share what I've observed while working with developers.

As a newcomer to programming, I work much more comfortably and boldly with LLMs, sometimes with detailed, structured prompts and other times with brief queries in natural language. However, programmers with traditional training who develop and maintain code daily don't show this confidence. They have legitimate concerns. Since they (humans) handle code development and maintenance, code quality remains paramount. According to GitClear's analysis, there's been a fourfold increase in recurring code blocks across 211 million lines of code over the past year. IBM research shows that 75% of developers need to edit AI-generated code before using it. For these reasons, they don't trust LLMs even when they probably could, preferring to edit code themselves. But here's the reality: I've been using these tools almost continuously for the last two and a half years, and they're constantly improving. Something an LLM couldn't do last month might be well within its capabilities today. We often don't recognize these improvements. But ultimately, for reasons that include some valid concerns, professional developers are reluctant to relinquish control and continue solving problems the old-fashioned way. Even when they do use LLMs, they still approach coding traditionally.

V. What Does Conway's Law Tell Us?

Consider all the digital products in use today. We're talking about millions of applications and trillions of lines of code. We can assume that any software that's been around for a while carries considerable technical debt. We can also imagine that software belonging to a team larger than one person, or to a company, contains several layers of legacy code living side by side, from its earliest days to the present. The perfect example is the different settings screens coexisting simultaneously in the Windows operating system.

Huh? Remember this window from 98?

You switched to a new Windows version with great excitement. Wow! The interface is very sleek. While adjusting settings, suddenly a nearly Windows 98-era settings panel pops up from below. Or you're a developer wanting to connect an application you frequently use to your own process using its API. You're trying to make sense of this otherwise well-functioning application's API documentation, which might as well never have existed, if you can even find it! All of these issues stem from Conway's Law, which developers attending DevOps conferences might be tired of hearing about. Named after Melvin Conway, who introduced the idea in 1967, this law states that an organization's internal structure, the communication patterns between people and teams, directly determines the shape of the software produced as output. In his own words:

"Organizations which design systems are constrained to produce designs which are copies of the communication structures of these organizations." - Conway.
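For readers who think better in code, here is a toy sketch of that idea in Python. It is my own simplification, not Conway's formulation: the team names, the components, the single communication link, and the `expected_integrations` function are all hypothetical. The assumption it encodes is that two components end up properly integrated only when the teams that own them actually talk to each other.

```python
from itertools import combinations

# Hypothetical organization: team names and components are invented.
TEAM_COMPONENTS = {
    "audio": ["audio_settings"],
    "network": ["network_settings"],
    "shell": ["new_settings_app"],
}

# Pairs of teams that communicate regularly (also invented).
TEAM_COMMUNICATION = {
    frozenset({"shell", "audio"}),
}


def expected_integrations(team_components, team_communication):
    """Return the component pairs whose owning teams communicate.

    Toy model of Conway's Law: no communication between two teams
    means no integration between the components they own.
    """
    integrations = set()
    for team_a, team_b in combinations(sorted(team_components), 2):
        if frozenset({team_a, team_b}) in team_communication:
            for comp_a in team_components[team_a]:
                for comp_b in team_components[team_b]:
                    integrations.add(frozenset({comp_a, comp_b}))
    return integrations


links = expected_integrations(TEAM_COMPONENTS, TEAM_COMMUNICATION)
```

In this made-up organization, only the audio settings get wired into the new settings app. The network team never talks to the shell team, so its old panel stays outside the new interface, which is the kind of leftover screen that surprises you in a freshly installed operating system.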

So when we look at the new version of Windows, we can picture the different parts of the team working on the new settings screen and how the audio interface team differs from the network team. We can picture these teams holding meetings and deciding how much of the old code and UI to keep, saying "let's change it up to one level of depth, bro, most users don't go beyond a certain point anyway." Among them are some who have been on the Windows Audio Interface team for 20 years, saying "we had a solution two versions ago, we can use it here," and occasionally being asked about problems that arise when merging. The entire DevOps movement basically emerged because people got tired of yelling 'Who broke the build?!' across cubicles.

Why am I telling you all this? Because as we'll see, for AI, specifically LLMs, to take jobs from programmers, they must offer a solution to this massive maintenance problem. From the day a solution arrives, we can imagine a transition to a different organizational model becoming possible. I don't think this is possible in the current paradigm, where LLMs have settled fully into the code-helper role. I don't know the solution, as I'm not an advanced programmer or LLM expert. But perhaps, like the innovation brought by transformers, a brand new model built on existing models could solve this.

Multiple questions about the future of software arise:

Will it be possible in the near future to maintain all this living software in any way - perhaps by a new generation AI?

Will the development of LLMs, the change of legacy code, and the change in mindset of programmers living in today's traditional software paradigm depend on a human generational shift?

Is there a possibility that we'll be working with code that isn't human-readable in the future?

In this model, what kind of organizational structure might companies adopt, and what kind of software would we end up with?

VI. Let's make some wild guesses.

When starting this article, I thought of some more questions and decided to leave them for a follow-up piece: What level will our interaction with computers be in the future? What will our interaction interface be? Where will we leave human agency (think airplane autopilots and self-driving cars)? Can a doctor's decision be completely left to artificial intelligence? What level of control will they maintain? Will they always need a dashboard that can drill down to the raw data level when necessary? All these questions are for the next article.

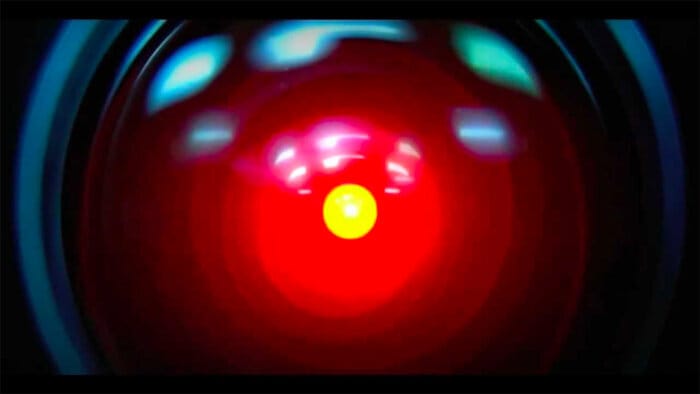

Your merge request is not accepted, Dave.

Looking at the future of software, we can see that we'll still need product managers, designers, and programmers in the future. However, the structure of the product development team might be different. I imagine a central system that's a more advanced AI than today's. Knowing that every junior who joins the company asks a senior developer who has been with the company for many years how to write code that would not break the legacy code, I imagine an AI taking on the same role and becoming an infinite, simultaneously accessible main developer unit and the code itself. I imagine a new team structure in this company that knows coding but doesn't code, communicating and interacting with AI instead. I'm talking about the mothership model or HAL 9000 that we're used to seeing in science fiction. Looking from 1968, when the great master Arthur C. Clarke wrote and the other great master Kubrick directed 2001: A Space Odyssey (interestingly, one year after Conway's definition), AI was pictured as the (flawed) central unit in the year 2001. What was the structure of the team that developed HAL, I wonder? Which version of HAL are we on in the film? Yes, the name suggests 9000, but I mean v9.01.234? Do they have merge conflicts when releasing new versions, or does HAL merge new code by itself?

At the beginning of the article and in different places, I specifically mentioned code quality and human readability. Because the point I wanted to reach was to ask whether a time will come when we leave human readability behind. Could we possibly be working on software whose language is understood purely by the machine, where we interact with it through interfaces it presents to us, and when it breaks down (which will be very rare) we call in high-level human experts, like calling a service technician when the washing machine breaks? I liken this to a very advanced kind of Unreal Engine Blueprint (a similar creative canvas product for creatives was launched recently). All AI developers are connected to this central unit. The AI provides us with readable code snippets when necessary so that we can understand how it writes, but in fact, there is a code base behind it that a programmer today might define as nonsense if they looked at it.

Some argue this is neither possible nor desirable, suggesting that black box code could cause us trouble just like in the HAL example. But this article isn't about the ethics of what should or shouldn't happen—it's a brainstorm about where real adoption might take us. And it's written to spark a conversation about what structure we'll be working in when we think about our professions in the future.

Tiny Challenge

If you haven't done so already, go to Perplexity now, select Reasoning - R1 as the model, and ask "In light of recent developments, which direction do you predict Tesla shares will go?" And watch what it does in real time.

Bright Minds: Donald Knuth

Born on January 10, 1938, in Milwaukee, Donald Knuth is known as the "father of the analysis of algorithms". Knuth is an advocate of "literate programming," blending code with comprehensive documentation. He created the WEB and CWEB systems to support this approach. He famously stopped using email in 1990 to focus on his projects and prefers written correspondence instead.

Time Capsule

The launch of Windows 95 on August 24, 1995, was accompanied by one of the biggest marketing campaigns in tech history. Microsoft licensed the Rolling Stones' song Start Me Up for its ads, tying it to the introduction of the iconic Start button. The Start menu has undergone five major redesigns since its debut in Windows 95, including its removal in Windows 8 and subsequent return in Windows 10. Each redesign reflects shifts in user needs, hardware capabilities, and design trends over time.


Peace,

Aydıncan.
