etaoin shrdlu

Or how we will remember "Hello World"

Hey everyone 👨‍🎨

I've been traveling a lot this past month, which means no new posts. But I think this one might make up for it.

Enjoy!

↓ Level 1: "Hello World" marks the end of an era where humans had to learn machine language to make computers work.

↓ Level 2: For 47 years, we developed advanced cognitive skills by translating our intent into machine instructions.

↓ Level 3: We're the last generation fluent in both human and machine languages. The next generation will only speak theirs, and computers will speak ours.

It's July 1978. In the early morning hours, there's a melancholic rush in the basement of The New York Times. The newspaper is being prepared for print with mechanical typesetting for the last time. From the next day forward, its pages will be composed from articles written on computers and set digitally.

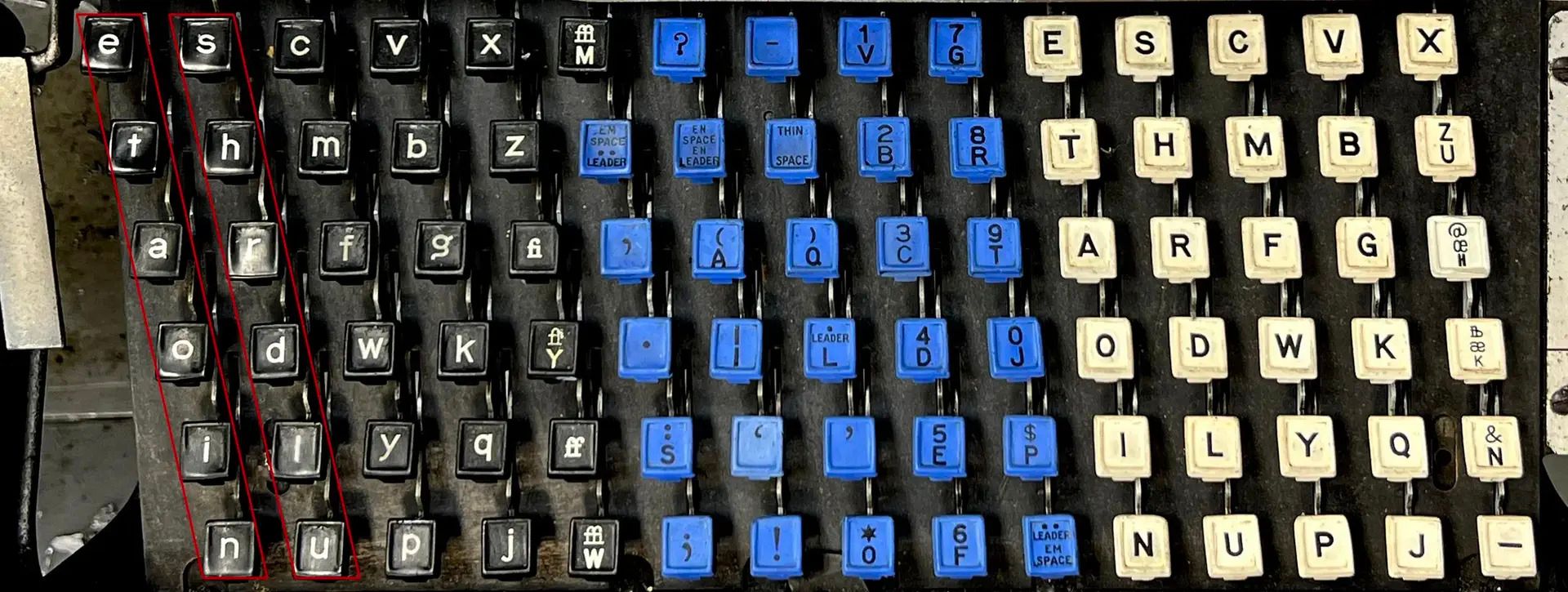

Until that day, every article on a newspaper page was set by hand on a linotype machine. Correcting individual characters in a line was very time consuming, so when a line contained an error, the operators found it easier to scrap the whole line and fill the rest of it with filler letters. Those seemingly random letters are the first two columns of the linotype keyboard, read from top to bottom: "etaoin shrdlu." They marked the erroneous line so it could be removed later. It was the mechanical equivalent of the QWERTY12345 combination we see in so many passwords today.

The linotype keyboard. When a line had errors, operators would quickly run their fingers down the first two columns.

The marked lines were not always caught, so etaoin shrdlu often appeared in print. It happened so often that the sequence eventually made it into the Oxford English Dictionary. If you want a sense of the atmosphere of that day, I'm leaving a link to a short documentary here.

1978 is also the year I was born. In the late 70s, the transition to computerization was as sharp as in the example above. Within a very short time, computerization would show up in every field, from cinema to music, from design to fashion. The truly significant change, however, came with personal computers (PCs). I remember it very clearly because that era coincided with my teenage years. From the late 80s onward, computerization first appeared in companies as "computer rooms," then ran its course until everyone had a personal computer at their desk. I remember our parents, or my father's colleagues, bragging about what they had done with computers: "I did the whole balance sheet on the computer, just hit print, boom, it gives me the whole month," or "Look, I can make the title a different font and bigger if I want, we're doing our end-of-month reports on the computer now."

We saw the same bragging again in their retirement years, this time about smartphones and the internet. "I log into the bank from my phone, boom, send it. My friends are watching, wondering how I did it. They're still walking to the bank!" I don't know if this sounds familiar, but these days I see similar bragging in my own age group (let's say 35-55): "I told ChatGPT to summarize it, boom, it came right out" or "I asked how to write it, ChatGPT produced it immediately!"

There are two reasons behind this bragging. One is obviously catching up with technology, not falling behind peers, or even if we dig deeper, actually staying young and all that. The other reason is what interests me. All of this feels magical. A computer understanding the topic with a simple request, picking up the pen (and with it, the agency), and writing lines of text. It's an incredibly magical thing.

A Bubble? A Bird? A Plane?

As the bubble noises around AI grow louder, I keep thinking. Does it resemble the ".com" bubble? Or is it something like the invention of the digital calculator? Metaverse? VR? Blockchain? I recently realized it's none of these things, thanks to a book I've been reading for the past month. "Make Something Wonderful" is a book compiled by Steve Jobs's wife and colleagues, consisting of his email exchanges, speeches, and interviews (you can download it here for free). And it is truly inspirational.

I want to share, untouched, a quote from a speech he gave at the International Design Conference in Aspen in June 1983:

“Computers are really dumb. They're exceptionally simple, but they're really fast. The raw instructions that we have to feed these little microprocessors—or even these giant Cray-1 supercomputers—are the most trivial of instructions. They get some data from there, get a number from here, add two numbers together, and test to see if it's bigger than zero. It's the most mundane thing you could ever imagine.

But here's the key thing: let's say I could move a hundred times faster than anyone in here. In the blink of your eye, I could run out there, grab a bouquet of fresh spring flowers, run back in here, and snap my fingers. You would all think I was a magician. And yet I would basically be doing a series of really simple instructions: running out there, grabbing some flowers, running back, snapping my fingers. But I could just do them so fast that you would think that there was something magical going on. And it's the exact same way with a computer. It can do about a million instructions per second. And so we tend to think there's something magical going on, when in reality, it's just a series of simple instructions."
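To make the "trivial instructions" in the quote concrete, here is a minimal sketch in C. The function names (`sum_is_positive`, `count_positive_pairs`) are hypothetical and do not come from the speech. They only illustrate the four steps Jobs lists, repeated in a loop.

```c
#include <stdbool.h>

/* Hypothetical helper: the four trivial steps from the quote. */
bool sum_is_positive(const int *there, const int *here)
{
    int a = *there;                 /* get some data from there */
    int b = *here;                  /* get a number from here */
    long long sum = (long long)a + b; /* add two numbers together (widened to avoid overflow) */
    return sum > 0;                 /* test to see if it's bigger than zero */
}

/* Hypothetical helper: repeat the same trivial steps for n pairs
 * and count how many sums came out bigger than zero. */
long count_positive_pairs(const int *left, const int *right, long n)
{
    long positives = 0;
    for (long i = 0; i < n; i++) {
        if (sum_is_positive(&left[i], &right[i])) {
            positives++;
        }
    }
    return positives;
}
```

Calling `count_positive_pairs` on arrays with millions of entries typically returns in a fraction of a second on current hardware, yet each pass through the loop does nothing more than the four steps above. That is the whole trick Jobs describes: nothing clever, only speed.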

Later in this speech, and in many others, when arguing that personal computers need to enter homes, Jobs notes that people don't want to learn programming; they want to get their work done faster and more easily. He talks about an elementary school child doing their homework with a paint program or a mother creating shopping lists and household budgets on a computer.

Jobs says this magic comes from speed. Today, people are experiencing similar magical feelings with AI tools, but in a different way and on a different level. Artificial intelligence isn't smart or anything; computers are still dumb. At their core, there are still ones and zeros. The difference is that they're no longer bound to explicit instructions and have a kind of interpretive ability. You know that famous video of a dad having his kids write instructions for making a peanut butter and jelly sandwich, the one that beautifully illustrates how algorithms work (the video is from 2017, by the way)? Well, for the average user (the kids), computers no longer work like that if a peanut butter sandwich is what you need. When you say "make me a peanut butter sandwich," it can make one on the first try. Just as we have learned since infancy, the computer has also learned what a peanut butter sandwich looks like. I'm leaving the question of whether this is intelligence to another article.

Ultimately, the computer magically understands what we're saying, through what it's been taught and through prediction, and produces outputs that are largely meaningful to us. On top of this, when it offers chat and conversation with anthropomorphic behavior, everything becomes even more magical. People aren't interested in how neural networks work or how transformers consumed data. They want to send social media posts easily, do their homework, and have the emails they can't bring themselves to write written for them.

For now, I think the AI transformation is none of the things I listed at the beginning of this section. Before getting to what it is, let's answer this question since it's on the agenda: Is it a bubble? From the financial world's perspective, most likely yes. But when the ".com" bubble burst, we didn't stop using .coms. That period actually kicked off the internet. I'm not even comparing it with examples like Metaverse or blockchain because none of them solved actual needs all at once from day one.

While reading Jobs's talks, I realized this transformation more closely resembles the computerization of society, but on a whole different level.

"Hello World"

    main( ) {
        printf("hello, world");
    }

I believe this phrase best describes the age of computerization, just as etaoin shrdlu defined the typesetting era. Though it's thought to have existed earlier, "Hello World" became popular among programmers after appearing in the book "The C Programming Language" (published, believe it or not, in 1978), where it captures a very human interaction with a computer. "Hello World" is the first signal that a programmer has begun speaking a computer language. It's also the result of a person learning to think and behave like a computer. Two simple words from natural English, squeezed between lines of computer language. Two words that, when bound together, symbolize a birth, a neogenesis. Perhaps it is the digital birth of the person writing the instructions.

On the other hand, while we were saying hello to the digital world by speaking its language, we always wanted the computer to understand what we said, to speak like us. The programming languages we developed as a layer above ones and zeros were already trending toward "Englishification" over the years. Languages that started with Assembly evolved to C, which gave birth to "Hello World," then to the even more English-like Python and even Swift (I'm listing these in this order purely to show the evolution of Englishification). The readability and comprehensibility of code, and how easy it was to learn and write, mattered. We call the people who can speak computer language "programmers." Despite all this effort, computers never understood us, nor we them.

If I remember correctly, I got a Commodore 64C in 1987 and started learning the BASIC programming language immediately. Years later, when I returned to programming, I realized this: "Hello World" actually taught us not just how to do things on a computer, but also cause-and-effect relationships, solving root problems, sequential and systemic thinking, and breaking down intent into atomic parts. I feel very lucky to have studied and learned programming again in 2017, before LLMs. If I were a very young builder today, I would never write "Hello World."

After my experiences with programming tools in recent weeks, I can see that this way of thinking will soon disappear. Today, instead of me saying "Hello World," the computer asks me, "Hello Aydıncan, what do you want to do?" A 47-year transformation is complete, and a new era has begun.

Believe it or not, I came across a comment on a Reddit thread where someone who lived through the mechanical typesetting era lamented how computerized publishing led to work being done with less thought about the craft and its details. Well, it's a never-ending cycle. We're in a transformation, and for centuries, without exception, every generation has complained that the next one has lost certain human qualities. So these observations of mine aren't a lament or nostalgia for the past. I understand the muscle memory of linotype, I've written "Hello World" many times, and now I can develop the applications I envision through natural language. Perhaps there's no loss and we're just continuing the journey. But someday in the future, when someone asks how computers think, no one will be able to answer, and lengthy historical research will be necessary. To understand the nature of that day, they'll also watch an old documentary from 2025 titled "Hello World."

Peace,

Aydıncan.
