Brain First > AI Next

AI Strategies for 2026

In this second piece of my yearly reflections and trend analysis for 2026 and beyond, I’ll be evaluating how we might build a co-working strategy with AI, looking beyond the general hype (whether it's bubble-hype or AI-hype).

↓ Level 1: Most people use AI as a smarter Google

↓ Level 2: In the future, the real gap will form between those who think first and those who delegate thinking

↓ Level 3: Protecting your cognitive autonomy is the new literacy

What about the Young?

A few weeks ago, I organized and presented a series of AI seminars for undergraduate design students. Since I'd be facing fresher minds, I assumed they'd be absolute beasts who would drag me into the depths of AI and challenge me, so I studied twice as hard as I normally would. In the seminars, I first explained how these tools work to establish basic AI fluency. Then I covered why ChatGPT and similar LLMs are unreliable sources of information, what the context window is and why context matters, and why using our brains and good old Google search is still more effective. Since I ran the sessions in an interactive format, I can say that the students' ChatGPT usage patterns largely matched the findings from OpenAI's own recent research.

ChatGPT is not all you need

Since I collected group responses by show of hands and knowledge-based answers through the highly scientific "a chocolate bar for a correct answer" method, I believe I can confidently discuss my half-observation, half-data-based results (let's say roughly 100 students participated in total). 99% of students use only ChatGPT and are mostly unaware that other LLMs exist. The second most recognized tool after ChatGPT is Gemini. I think this is due to Gemini's recently rising performance, plus Google's traditional advertising campaigns. In Türkiye, they even sponsored a chef reality show on prime-time TV to boost trial numbers. Data also confirms Gemini's rise.

Students know Gemini is owned by Google, but only 2 people knew which company owns ChatGPT. Since the participants were design students, they're aware of and regularly use generative image tools like Midjourney or Canva AI.

Nobody knows what abbreviations like "LLM" or "GPT" stand for, or what "Agentic AI" is. I'd estimate that at most 5% bother to write effective prompts. Still, assuming some students were too shy to respond, let's add about 10% to all results and move on to the analysis.

Worldwide, ChatGPT has entered students' lives as a homework hack tool. The curious ones who would have spent time researching anyway are now doing deeper research with LLMs. On the other hand, students already inclined to take shortcuts have been pushed toward even more laziness by AI. Beyond homework, other uses include general guidance and accessing information. In short, just like the research we'll examine below, students display the same usage patterns as all ChatGPT users. Should students be knowledgeable about AI? Yes. Just like media literacy, AI fluency will help them develop healthier judgments. Otherwise, a Sam Altman-induced perception of reality looks quite dangerous.

A Smarter Google For Most of Us

The results of OpenAI researchers' recently shared study "How people are using ChatGPT" support the picture above. Rounding the numbers without getting lost in details, roughly 55% of approximately 800 million ChatGPT users use the chatbot for general guidance. Beyond that, writing takes the largest share at 23.9%. Looking at the total, we can conclude that 77% of AI users are non-technical, using it for general daily tasks, information search, and writing.

Based on observations from my own circle, I can say there's a huge gap in usage depth between an AI-fluent user and an ordinary ChatGPT user, especially among those who develop products. However, I want to underline two points.

First, this doesn't mean fluent users have figured everything out and are working with perfect efficiency, like clockwork. On the contrary, building an efficient and, more importantly, reliable system with AI requires a tremendous initial setup effort. Contrary to what people think, nobody has really figured anything out. Everyone is trying to get somewhere through hundreds of trial-and-error attempts.

The second point is that if you think you've been left behind, or if someone is implying so, that's completely wrong. With some focused time on the subject, you can close the entire gap in as little as 3-5 days and draw yourself an AI-inclusive roadmap.

So here are 6 trends that I think will matter next year and beyond.

1. Brain First > AI Next

Since we opened the piece with students, let's stay with them and discuss the “Your Brain on ChatGPT” research MIT recently published examining the effects of LLM usage on students’ cognitive activities. Researchers asked 54 students to write an essay and divided them into 3 groups:

Brain-only group: They would write using only their brains without any external tools.

Search engine allowed: They could get help from search engines.

LLM group: They could use LLMs as much as they wanted.

According to the brain activity monitored by EEG while participants wrote, the LLM group showed quite stagnant cognitive activity. Loosely speaking, their neurons were barely firing. Meanwhile, search engine users showed slightly more brain activity, and naturally, the brain-only group had the most neuron firing.

Looking at the essays written, the results get interesting. LLM users' topics were quite similar and touched on the same points (classic shallow LLM output), while search engine users' essays managed to touch on different points. Again, the brain-only group produced the most diverse concepts and bolder perspectives. You can review all the details of the research here, which also evaluated how well participants recalled and owned their opinions. All the results are as you'd expect. But here is the part where things get really interesting.

Researchers conducted one final test on the groups by switching their tools and telling them they could improve their essays. The group that had started with LLMs showed even less brain activity, but when the brain-only group started working with an LLM, their neurons were observed going absolutely wild. So we can conclude that the brain first > AI next usage pattern achieves the richest results in terms of cognitive activity and output.

A Human-centric Future

I remember the first time I saw AI-generated images a few years ago. I was deeply impressed and concerned at the same time. How would we evaluate creative works in the future? Where would the value be? Because truly, everyone's first reaction was "okay, it's over."

As I progressed in my thoughts, I realized that what makes an artwork meaningful to me is the story of the human behind it. AI can today redraw hundreds of Incal frames and produce stories that never existed. But what makes me happy isn't just the drawings; it's diving into a world that came from the mind and pen of a genius like Moebius. Thinking about the personal history that enabled him to create such worlds. Exploring the limits of a human mind's imagination. And looking with admiration at what I estimate to be tens of thousands of hours of mastery.

After everyone's initial amazement wore off and after experiencing LLM shallowness for a while, we've started returning to our dear brains. This doesn't mean we've stopped using genAI. Rather, understanding what is valuable and why has become the priority.

Within the creative industry, some declared total war from day one, arguing (rightfully) that image generators are built entirely on theft, while others joined this war only after the dust settled. Various creative industry groups are rebelling against AI, putting "Made by Human" or "Not by AI" labels on their works. While I totally understand the need for a label, I find using one as concerning as not using one. As the author of these lines, I too feel the need to state that the output isn't from AI but is the result of extensive human labor. As for why I find it controversial: briefly, labeling human labor feels like conceding defeat before the battle even begins.

Yes, I know "made by AI" labels or company logos are on the generated images, but have you ever watched a boomer scrolling on Instagram? No further questions, Your Honor.

Whether we label or not, not just next year but over the next 5-10 years, we'll see human touch being valued more than ever.

So, Brain First > AI Next, and carry on.

2. My Take on Longer, Deeper Tasks

We've been experiencing AI chatbots and coding tools completing multi-step tasks through what's called "reasoning" flows for a while now. Led by coding AI startups, the ecosystem is working on even longer tasks. For example, during the day we work interactively with a coding tool, then shut down the computer and go to sleep at night. The question being asked is: Why shouldn't they keep working through the night? Currently, developing a product or feature depends on hundreds of ping-pong messages with AI. According to this optimistic proposal, after a more detailed planning and understanding session, it will be possible for AI to work alone for hours through the night and finish the entire product all by itself.

To understand how this might be possible and for whom it might be suitable, let's divide the work into two. Setting aside writing tasks, I'm focusing on technical work where multi-step work really comes to the fore. On one side is VibeCoding, on the other side is AI as a code helper.

VibeCoding describes non-technical people developing a product using only natural language. As a half-technical vibecoder myself, I should note that experimentation is at the core of this activity. Vibecoding is a playful activity. It's a genuinely fun journey toward producing an output, co-working with the machine through trial and error. I wouldn't want to leave this journey to AI, and I couldn't anyway, because I don't even know what the end result will look like.

Where longer multi-step tasks will really be useful is in startups or organizations that have a development team working on legacy code, closing dozens of Jira tickets every day. Here, work moves through a chain of operations, each of which an AI agent could do. A request comes from a user, the request is analyzed, it becomes a bug report or feature request, an analyst/PM opens a ticket with requirements and acceptance criteria, the ticket falls to a developer according to priority, the developer first reviews it, returns to the PM with questions or gets to work, then tests, and so on. The entirety of the work I'm describing can move to AI with very little human oversight. With Cursor proposing solutions for tickets and Claude integrating into Slack, these tools have already started to sneak into the flow.

By the end of 2026, a developer with that type of workflow might just be opening their computer in the morning, reviewing the code AI developed, giving feedback, and pushing to production.

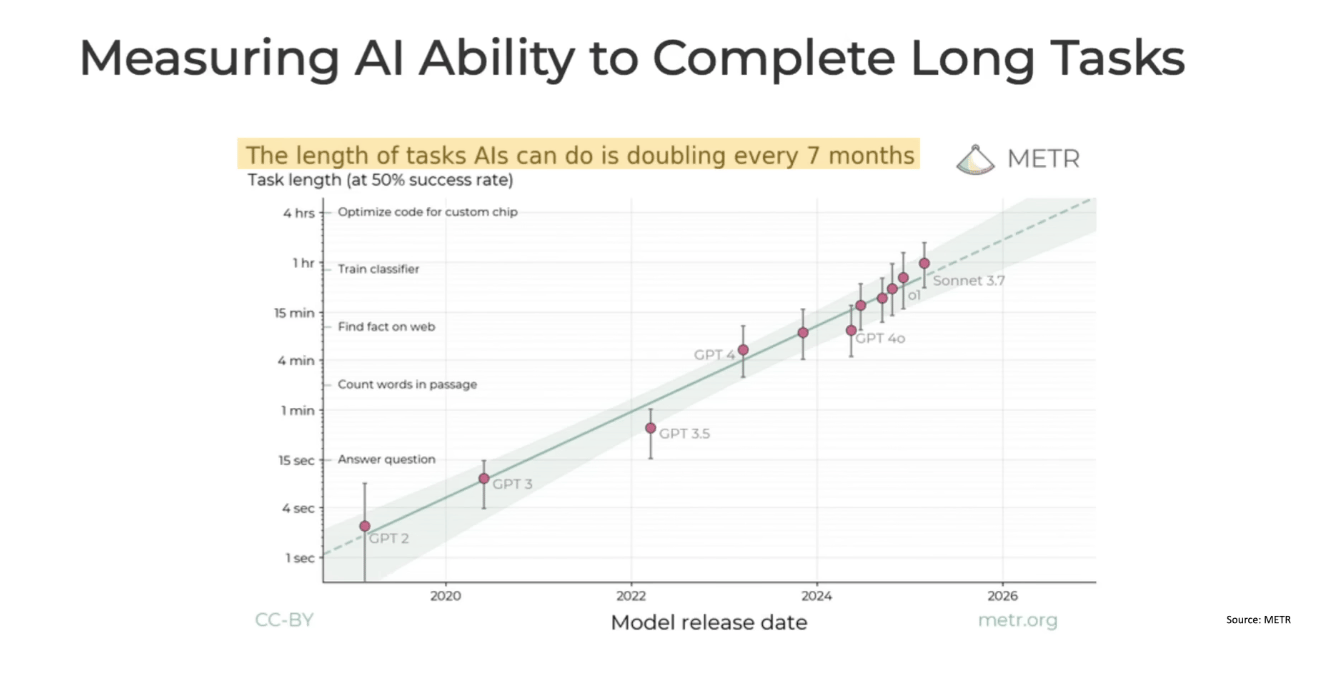

According to METR's research, which Yoshua Bengio presented in his TED Talk, the length of tasks AIs can complete is doubling every 7 months, from answering simple questions in seconds to optimizing code for hours. This exponential growth in AI agency sounds exciting for productivity, but Bengio raises a critical concern: as these systems take on longer, more autonomous tasks, our ability to oversee and correct them diminishes. The same capability that lets AI work through the night also means more time for things to go wrong without human intervention.
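To get a feel for what a 7-month doubling time implies, here is a minimal sketch of the arithmetic. It assumes the trend simply continues at a steady rate, which is a big assumption and not something the research guarantees. The function names and the 60-minute starting point are hypothetical, chosen only for illustration.

```python
DOUBLING_MONTHS = 7  # doubling period quoted above


def growth_factor(months: float, doubling_months: float = DOUBLING_MONTHS) -> float:
    """How many times longer tasks become after `months`, assuming steady doubling."""
    return 2 ** (months / doubling_months)


def projected_task_minutes(start_minutes: float, months: float) -> float:
    """Project a task length forward in time under the same steady-doubling assumption."""
    return start_minutes * growth_factor(months)


if __name__ == "__main__":
    # Hypothetical starting point: a task that takes 60 minutes today.
    for months in (7, 14, 28):
        minutes = projected_task_minutes(60, months)
        print(f"after {months} months: x{growth_factor(months):.0f}, about {minutes / 60:.0f} h")
```

Under these assumptions, four doublings (28 months) turn a one-hour task into a sixteen-hour one, which is roughly the "keep working through the night" scenario.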

3. Concerning Rise of AI-Generated Code Vulnerabilities

As a builder, I want to get to one of the issues that concerns me most as I prepare to launch a product. To explain the topic, I'll use my work with E., a dear friend who's an old-school guru developer, as an example. E. and I have worked together for years. To convey how old school he is, let me mention he always writes pure PHP and uses his laptop almost without touching a mouse. Whenever I come with an innovative feature idea, he usually responds with the most horrifying security vulnerability scenario possible. If you're a forward-thinking person, this annoys you at first, but you also know he's absolutely right. In the end, we argue and find a middle ground. A secure and innovative feature is launched.

Globally, AI adoption is still strongest in programming. VibeCoders don't have a developer friend like E. beside them, and this adoption has brought new dangers with it. As companies quickly push thousands of products and features using AI tools, code bases with security vulnerabilities have multiplied. Add thousands of products created with zero technical experience or oversight, and you get a new market focusing solely on vibe coding security.

Millions of developers will continue adding vast amounts of code to their products every day. An app with a couple thousand users having security vulnerabilities might not be a big issue. But when companies providing serious infrastructure develop features the same way, the problem gets serious. On top of that, as agents start acting more and more on their own, developers might delegate even more to them.

In 2026 and beyond, one of these bugs that we don't yet know exists will very likely cause new headaches for the world.

So vibe code or not, secure and test before you release.

4. Is Enterprise's 95% ROI Failure Real?

Let's continue with another of 2025's headline-grabbing research findings. The executive summary of the report published by MIT NANDA opens by stating that despite $30-40 billion of enterprise investment in AI, 95% of organizations haven't seen a return on investment (literally the first sentence, btw). Since the data was coming from an MIT-ish organisation, the striking result was jumped on by all news channels, generating hundreds of headlines and thousands of shares. However, there are serious debates about the research's scientific validity and methodology.

First, let me note that the organization using the licensed MIT name is NANDA (Networked AI Agents in Decentralized Architecture), a project group that develops AI agents. As Sean Goedecke showed in his post, which compares the report with other research, how it reached this conclusion is quite controversial. As Goedecke and others noted, the 95% figure cannot be reached by totaling any of the tables and numbers in the report. A 5% figure is mentioned elsewhere in the report in different contexts, but there's no foundation for this bold claim.

According to McKinsey's “State of AI” research, participants report use-case-level cost and revenue benefits, and 64% say that AI is enabling their innovation. However, just 39% report EBIT impact at the enterprise level.

In summary, people will keep disagreeing about how an AI project differs from any other software initiative and how success or ROI should be measured. So rather than taking these numbers as concrete results, we can use them as a baseline and rely on our own real-world observations. Real enterprise adoption, meanwhile, is all employees having ChatGPT write all their emails, marketing materials, presentations, and even contracts. That's 100% product-market fit right there.

According to Natalia Quintero, Head of Consulting at Every, who conducted a more actionable study by talking to nearly a hundred companies, failed AI initiatives come from expecting a high level of AI fluency from everyone. The "we need to do something with this" mentality, instead of developing solutions to existing problems, has brought us here. Again according to Quintero, it ultimately comes down to one champion within the organization truly solving something, setting an example, and creating impact by sparking curiosity. This impact then spreads within the company as everyone wants to do something similar.

Enterprise has always been slow in adapting to all kinds of change. It may take years to truly restructure everything from scratch. Which brings us to the next topic.

5. Silos Cracking. Finally!

I want to start this section with a teaser from a future Node Mode essay. Despite the people inside being super educated and super smart, enterprise as an entity is dumb. Sorry. I said it. We service designers spent years trying to add intelligence to corporate organizations. The way we did this, like enabling different neurons in the brain to discover new pathways and patterns, was by trying to eliminate silos so the entire company would start working in harmony, sharing bits of information with each other.

We achieved only partial success through years of struggle. Now AI has started to change corporate intelligence through top-down interventions, pushing roles to merge. I'm not just talking about designer-engineer-product roles. According to this HBR article, companies that don't know what to do with AI or how to handle it have found the solution in rethinking human and talent issues. You know that 'we're all going to lose our jobs' topic? Well, it's not exactly like that, although data shows entry-level jobs are starting to take hits. HBR gives the example of Moderna, which merged the CHRO and CTO roles. We'll see what success Moderna achieves from this in the future. According to IBM's CIO Ann Funai, the traditional function-by-function approach is 'starting to crack.' And to that, I just want to say finally!

6. SF is so Back!

And this might not be good for the rest of humanity.

The "San Francisco is done" talk, which started way before the pandemic, became a reality when the city's energy was completely drained during those years. The city's public safety was in serious crisis. The 2023 stabbing death of Cash App's creator in Rincon Hill was the final nail in the coffin. The displaced startup energy searched for alternative places like Austin and Miami. But the AI fury that started around the same time said "hold my context window" for SF.

Fast forward to today, and SF is experiencing a full-blown AI Gold Rush. Everyone agrees the city has returned to its former vibrant state. But this time there's an important difference from the old ecosystem: pretty much the entire city is working on AI. And this doesn't bode well for the rest of society. The AI Gold Rush is producing even crazier startup stories than before. Those reaching million-dollar ARR in months, not years, those reaching 100 million in revenue with just 3 people... Alongside these stories, all the big players are in the AGI race. Right now, everyone is continuing to develop their models in a storm with no clear destination, feeling both excitement and anxiety. Although many researchers and scientists have signed on to the view that AI will bring trouble upon us, nobody can exit the competition they're in. The prevailing view is "if we stop, they'll do it anyway." The same applies to regulations: "If we comply, they'll find loopholes in regulations and keep moving in one direction anyway." Yoshua Bengio, one of the godfathers of AI, said in a TED Talk this summer: "A sandwich has more regulation than AI."

The Guardian's special report followed the city's energy along Caltrain, the Valley's famous commute route. The reporters observed a community of entrepreneurs and developers hustling to apply AI to real-world money-making ideas, with zero appetite for any brakes on AGI progress. 'We don't do that in Silicon Valley,' said one developer. 'If everyone here stops, it still keeps going,' said another. 'It's really hard to opt out now.'

In my previous post, I raised a question about why we're building a future nobody wants. That quote answers why.

But let's try to focus on the spirit of the season anyway, shall we?

Let's Close The Year 🎄

I want to close the year and the article positively from a builder/creator perspective. From 20 years ago when I was trying to center a table with CSS for hours, thinking "there must be a sensible way to do this" or "I wish the computer understood what I'm trying to do," to now living in an era where the computer understands my intention in one go, I'm experiencing genuine fascination. I don't think the effect can be fully understood without spending 2 hours developing with Lovable, Cursor, or Claude Code. I'm incredibly excited that LLM-based coding tools have removed barriers that were once set by my skill limitations, along with the mental blocks that came with them. I know this is true for many people like me.

But my best creative outputs still come when I step away from the computer and work with pen and paper (to be honest, usually iPad and Pencil but you get the idea), or even further away, in moments when I'm alone with myself travelling. For this reason, especially for 2026 and beyond, I'm aiming for more offline time, more reading physical books, and more writing.

Wishing everyone who loves to create a healthy and fun year ahead.

Node Mode [Off][ ]

Peace,

Aydıncan.
